Naive Bayes for Nepali News Classification. Hello everyone, welcome back to our blog about news classification. In this blog, we are going to explore Naive Bayes for news in our native language, Nepali. I started this project nearly a year ago but I never finished it because I did not know anything about it […]

machine learning

Nepali News Classification Using Logistic Regression

Logistic Regression for Nepali News Classification. Hello everyone, and welcome back to my news categorization blog. In this blog, I’ll be looking into Logistic Regression for news in Nepali, which is our native language. I started this project almost a year ago but never finished it because I had no idea what I was doing […]

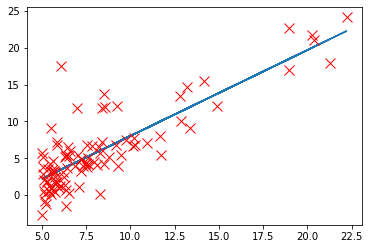

Linear Regression Using Different Gradient Descent

Gradient Descent. Gradient Descent is the most popular optimizer for updating parameters; it uses the gradient of the error with respect to each parameter. The parameter update rule can differ, which gives the different variants of Gradient Descent. Mini-Batch Gradient Descent. It is the simplest algorithm, where we update parameters in […]

Logistic Regression from Scratch in Python: Exploring MSE and Log Loss

Logistic Regression From Scratch. Hello everyone, in this blog we will explore how to train a logistic regression model from scratch. We will start from the mathematics and gradually implement it in small chunks of code. Import Necessary Modules. pandas: for working with DataFrames; numpy: for array operations; matplotlib: for visualization; time: function […]

R Exercise: Validation & Cross-validation for Predictive Modeling

Validation & Cross-validation for Predictive Modeling, including Linear Model as well as Multi Linear Model. Before starting the topic, let’s get familiar with some terms. Validation: the act of confirming something as true or correct. Validation is also the process of establishing documentary evidence that a procedure, process, or activity was carried out in testing […]

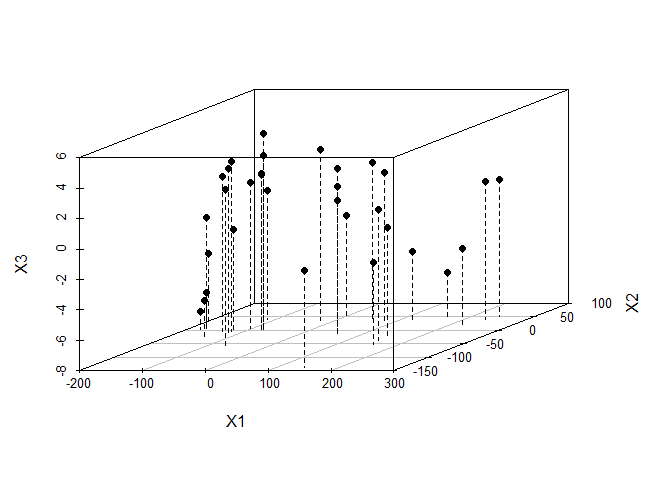

R Exercise: Working with PCA and Dimensionality Reduction

Check the mtcars data with head and save a new dataset as mtcars.subset after dropping the two non-numeric (binary) variables for PCA. data <- mtcars head(data) ## mpg cyl disp hp drat wt qsec vs am gear carb ## Mazda RX4 21.0 6 160 110 3.90 2.620 16.46 0 1 4 4 ## Mazda RX4 […]

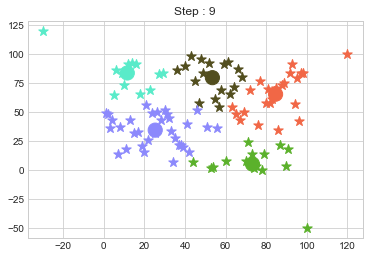

K Medoids Clustering from Scratch in Python

K Medoids Clustering is a clustering algorithm. Have you tried writing it from scratch in Python? Before that, if you are also looking for other algorithms from scratch, please follow along: Linear Regression from Scratch, Logistic Regression from Scratch, Logistic Regression with Different Loss Functions, PCA from Scratch, K-Means Clustering from Scratch […]

K means Clustering in Python from Scratch

K means Clustering in Python from Scratch. Introduction. K means clustering is a very simple type of unsupervised learning that is used to solve clustering problems. Using this algorithm, we can easily assign the given data points to a given number of clusters (k). To do so, we should first choose the number of clusters. In k means cluster […]

Writing a Logistic Regression Class from Scratch

Logistic Regression. Logistic Regression is not exactly a regression; it performs classification. As the name suggests, it uses the logistic function. This notebook is inspired by the GitHub repo of Tarry Singh, and I have referenced most of the code from that repo. Please leave a star on it. Artificial Intelligence Deep Learning […]

Writing a Linear Regression Class from Scratch Using Python

Linear Regression. Introduction. Before there were any ML algorithms, there was the concept of regression. Linear Regression is the process of estimating the value of a dependent variable from a number of independent variables. Take, for example, predicting the price of a house using variables like the size of the house, age […]